Mr. Chatterbox, or, The Modern Prometheus

A few days ago, I posted about a personal project that I've been working on for the last few weeks: Mr. Chatterbox, a chatbot trained from scratch on Victorian-era literature. I have to admit, I was totally blown away (and a little frightened!) by the reception. Whenever I post about personal projects, I'm used to them being politely ignored. The fact that this wasn't ignored surprised me mightily. I also received lots of questions about how Mr. Chatterbox had been trained, what tools I have used, whether he had been really trained from scratch or just fine-tuned, etc. I was only half-prepared to answer these queries because I was not expecting this to receive any attention! So, with what free time I had on an otherwise-busy family weekend, I sat down to write this narrative documentation.

While I do not have a technical background, I am very fortunate to live in the era of Andrej Karpathy's nanochat, a very simple harness for training LLMs, and Claude Code, a tool for those who, like me, know just enough Python to know how to break things but not enough to know how to fix them. I am not a machine learning expert or AI lab with gobs of money. My only co-worker can't speak English and spends most of the day sleeping on my lap or cleaning her fur. I'm just a man with a laptop, Claude Code, and a dream of the 1890's.

Origin & Literary Inspiration

As I've written about before in this newsletter, I've gone through an MFA program. I have a great love of literature and the craft of writing. I have also always been fascinated by technology, and by artificial intelligence in particular. Recently, I have been auditing a class in AI in the CS department here at the University of Illinois. During the unit on transformer models, I happened to stumble across the British Library Books dataset, a dataset of digitized books dating from between 1500 and 1900. At the same time, I was reading This Thing of Darkness by Harry Thompson, a seafaring novel that follows Charles Darwin and Captain Robert FitzRoy on their fateful voyage aboard the Beagle. By day I was learning about AI, and by night I would settle down and follow two Victorian men of science in their journeys across the world. I became intrigued by an idea: what if I were to train a transformer model exclusively on Victorian literature, creating what would be, in essence, a Victorian gentleman in silicon? I didn't want to fine-tune an existing chatbot. I wanted it to be truly Victorian from first-principles. The vocabulary and worldview would be encoded from Victorian literature at the deepest level. With my aforementioned tools, building this chatbot was well within my reach. It would be easy!

The Easy Part

Pretraining was fast and relatively painless. First, I downloaded the British Library dataset split of all 19th-century books. I filtered those down to books contemporaneous with the reign of Queen Victoria—which, unfortunately, cut out the novels of Jane Austen—and further filtered those down to a set of books with a optical character recognition (OCR) confidence of .65 or above, as listed in the metadata. This left me with 28,035 books, or roughly 2.93 billion tokes for pretraining data. After some fooling around with Kaggle and Google CoLab—neither of which could handle the task at hand—I decided that I needed more serious hardware to complete pretraining. I settled on using a Vast.ai instance that used PyTorch. Renting a NVIDIA H-100 GPU ran me between $1.50 and $2.00 per hour.

Using Claude Code, I trained a BPE tokenizer from scratch on the corpus, ending up with a vocabulary of about 32,000 words. Using a modern tokenizer wouldn't capture the unique Victorian morphology and orthography of the corpus. When it came time to actually run pretraining, there were a few bugs and bumps along the way, mostly related to the fact that I was using a custom tokenizer and differences in how the Vast.ai instance was set up and how nanochat expected things to run. I eventually had Claude Code write a setup script that would patch nanochat's engine, dataloader, and tokenizer scripts. Getting Flash Attention 3 to work was especially frustrating. I had to dig up the correct pre-built wheel for the environment's version of PyTorch, something that Claude Code seemed to have trouble with. However, my method for dealing with most other problems was to nicely ask Claude Code to fix them once identified, and it was able to without too many issues.

If I were an organized engineer, and not a scatterbrained artist, I might have taken better documentation of the issues I encountered during those salad days of pretraining, but, unfortunately, I am who I am, and so I have very few detailed notes pertaining to those complications beyond what I have written above. What I do know is that the final pre-trained model came out to about 340 million parameters, and had a final validation bpb of 0.973. The pretraining process took about five hours on-chip, and cost maybe $35. I had my pretrained model, trained in 6496 steps. Things were proceeding swiftly, and cheaply!

The Hard Part

A pretrained model, though, is not enough. I may have robbed the sepulcher of Victorian literature to stitch together a body from thousands of old books, but it lacked the spark of intellect that would allow such a creation to engage in discourse. I needed to develop some kind of dataset to teach it the art of conversation. And who better to teach it the art of conversation than the greatest of wits, Oscar Wilde?

I began by assembling plays by Oscar Wilde and early George Bernard Shaw, but quickly realized that they would not yield the thousands and thousands of training pairs necessary to train a multimillion parameter model. The model needed to learn to respond on-topic to input questions on a variety of issues, beyond just cucumber sandwiches. Fortunately, I already had a corpus of 28,000 books, so I set Claude Code to work extracting dialogue pairs from the books. I ultimately ended up with 190,000 or so training pairs. So, when one person said X, I had an example of another person saying Y. The art of conversation!

However, this left me with another problem: these pairs would teach the model to respond in a Victorian manner to Victorian input, while hypothetical users will likely not be querying the model in a Victorian manner, but in a modern manner! Therefore, I needed to rewrite these corpus pairs so that the input question was in modern argot. This task was more than I could possibly do by hand, so Claude Code suggested, helpfully, that I used Claude Haiku to rewrite the input questions. Thank you Claude!

What I didn't consider was the API cost of rewriting 190,000 input questions. I had a set-it-and-forget-it system of $15 refills when I ran out of Claude API credit, which I had never done before. I set my computer to work one night to rewrite the questions, and went to bed. When I woke up, I had around 20 emails notifying me that my API credit had been used up, and then automatically topped off. This little bit of naiveté cost me around $165.

But the worst was yet to come. As I began to review the newly-rewritten QA pairs, I realized that many of them were unusable. The system that Claude Code had set up to extract dialogue pairs from the books had ripped passages from the novels more or less at random, and would be completely useless as training data. For instance:

User: Blanche clearly won't agree to let his companions continue on, and now Neville is stuck figuring out what to do next?

Content: "Blanche could not speak for fear of being betrayed by her voice, but her ges- tures assured Nevllle that this was an agreement she would never consent to ; and so perplexed was he at the impediment thrown in his way, that he knew not what course next to pursue."

All in all, it looked like a quarter, at most, were anywhere near usable. It would have been helpful if I had checked this before deciding to rewrite all 190,000 pairs, but, fortunately, having that many pairs still left me with a rich SFT corpus, even if I threw 75% of them away. Working with Claude Code, I used sentence-transformers to compute the similarity of question and answer, keeping only those that scored a .55 or above. This culled the QA pairs down to around 56,000. Had I done this before the rewrites, I likely would have saved myself $100 or so. But that was irrelevant now. Armed with my rewritten QA pairs, plus the plays, I was ready to fine-tune my model.

The Hard Part: Part II

Once again, I used nanochat's inbuilt script for supervised fine-tuning (SFT), and I ran it on a rented Vast.ai instance. But, though the fine-tuning process was much, much faster than the initial data phase, I soon recognized the limitations of what I had done. When I ran an assessment script, I got outputs like this gem:

User: "Hello"

Content: "I am the same as he used to be, and I have grown almost old-fashioned in my notions of what ought to be. But there's no harm done ; it is only you who must blame him for his folly now — at least so far as that goes... To-day is ours — to go on as we have always\n\nThe Story of an Old Farm. 104 done ; but in life there are shadows and broken visions; and when death comes you must not think lightly of it. Good bye, my dearest child ! I shall miss you very much ; 'tis a comfort to remember that your mind was once more active than mine has ever been. God bless thee, dearie !'\n\nCHAPTER XXXIV. THE LAST DAYS OF HIS LIFE...

Totally useless. This model—which I will call Model #1—had learned to emit Victorian-sounding novelistic gobbledygook in response to user inputs, not how to answer user queries. I had assumed my pre-written QA pairs were good enough, when they clearly weren't. It was back to the drawing board.

I knew the model needed a sufficient amount of content to discuss Victorian topics, but it also needed to learn how to respond in a coherent way to user input. So, for my next step, I decided to start including fully-synthetic data in the mix. Working with Claude Code, I asked it to write a script that would direct another LLM to write a .jsonl file of fully-synthetic scenes. In them, a user greeted the LLM, queried about Victorian topics, and the LLM responded in a period-appropriate manner for 2-4 turns. We developed several different topics, personas, and keywords that would be integrated into the prompts. The first version of data generation was, I felt, too narrowly skewed, so I expanded it to cover 70 or so different topics. To cover topics that I may have forgotten about, I asked Claude Code to write a script that would search the corpus for passages and random keywords and include those as additional conversation prompts.

Once again, I used Haiku for the task (in for a penny, in for a pound). Once again, I ran the generation script and walked away. And, once again, I realized that I had not considered the API cost of writing all of these conversation. In total, I output over 20,000 synthetic conversations at the cost of around $145. The conversations were more coherent and so I felt better about spending this money. As I reviewed them, I thought to myself, "They may sound a little stereotypical, but the QA pairs directly from the corpus should balance that out." But that is not what happened. When I ran my next SFT round, which utilized about 20,000 QA pairs and the 20,000 synthetic conversations (plus my plays), I found that the model (Model #2) had learned some of the worst habits of modern LLMs in a Victorian voice:

User: What is London like?

Content: I am pleased you take my meaning! You have touched upon one of the great questions of our age! Yes, London has become a city where even respectable families may find shelter and rest...

While the model was able to answer questions and respond coherently, nearly every question or statement was answered with overwhelming flattery. The unctuousness reminded me of Uriah Heep, one of Dickens' great villains. My model would be a true gentleman, not a climber like Heep! In SFT, the model had over-indexed on patterns common to modern LLM output. It had also over-indexed on certain topics, which created gravity wells (all conversations would eventually end up talking about Darwin, for instance). The QA pairs weren't structured enough, and the synthetic conversation leaned too heavily into Victorian tropes and bad habits. I needed something in-between.

The Hard Part: Part III

Laying awake at night, turning over this project in my mind, I happened upon an idea: instead of working up additional synthetic conversations, why not just dig back into my QA pairs and corpus data, and use those as a way to get the best of both worlds? I decided I would make a two-pronged attack: I would generate pairs that were corpus-grounded (i.e. I would give a modern LLM a passage from Victorian literature and ask it to construct a multi-turn conversation based on that passage), and others that were corpus-extended (i.e. I would take a QA pair and ask a modern LLM to extended it out by 2-3 turns). I would leave in a percentage of purely-synthetic data for greetings, edge-case handling, abuse handling, goodbyes, and the kind of stuff you just wouldn't get in the existing corpus.

Claude Code wrote the script, I re-wrote the prompts to ensure they were to my liking, and I began to run the first part of this process, quickly racking up $60 in Claude API calls. By this point I had forked over $370 to Dario & Co. Enough was enough. For the second part of this data development round, I pivoted from Claude Haiku to GPT-4o-mini, which was much cheaper (I spent maybe $5 in API calls). Between the two of them, I ended up with roughly 20,000 corpus-grounded and corpus–extended conversations of 2-4 turns apiece. I had also learned my lesson from the last SFT round: I had Claude Code write a regex script that pruned away the most egregious chatbot-isms from my training dataset ("You have touched upon the very x of y!", "Ah! London is a tapestry of blah blah blah", and "Such-and-such, my dear friend!") as well as repetitive topics. I was left with a much smaller dataset for SFT (15,000 QA pairs, 4,000 corpus-grounded, 4,000 corpus-extended, 300 greetings, and 600 play pairs). The outcome, or Model #3, was much improved:

User: Hello!

Content: Mr. Chatterbox at your disposal. Might I offer you some of this preserved mutton? it is rather more delicate than any we get here, and perhaps less nourishing.

User: I love Mutton!

Content: Certainly, Monsieur le Baron; that dish pleases me greatly. What brings you to call on us to-day?

But there were still problems:

User: I have another question: who is the president of the United States?

Content: Albert Chatterbox, an astute man, has been appointed as his successor; he resides at Washington, a place that commands my admiration for its architecture and public buildings alike. He possesses considerable talent in conversational powers; his ability to arrange details with such remarkable diligence speaks volumes regarding his character and abilities, which render him invaluable on all matters of the gravest moment.

The name "Mr. Chatterbox" had itself become a gravity well. It seemed the model thought everybody was Mr. Chatterbox. The model also had trouble with goodbyes, abuse handling, and answering basic factual questions that a learned Victorian gentleman would reasonably know (such as "Who is the President of the United States?" and "What year is it?"). To combat this, I decided that the next training cycle (to produce Model #4) would consist of two SFT rounds: one on the larger SFT dataset—the QA pairs, corpus-grounded conversations, etc.—and one on a smaller dataset of greetings, edge cases like abuse and gibberish, factual inquiries, goodbyes, and the like. I would use GPT-4o-mini to boost this dataset to roughly 2,000 pairs for a more robust training round. To improve the fidelity of the second SFT round, I also had Claude Code scour our corpus and extract examples of greetings, challenges, and farewells to provide as part of the prompts. I also wrote a few dozen examples for the second training round myself. I called GPT-4o-mini, uploaded my datasets to the vast.ai instance, and ran the two training rounds, as planned.

The Final Stretch

This SFT round (Model #4) solved a few of the problems of Model #4, but seemed to lose some of the spontaneity and charm of the earlier model. It also retained Mr. Chatterbox as a gravity well. At this point, I felt that I had more than enough data for SFT, it was just a matter of finding the right mix. I felt like a whisky distiller on the Scottish highlands: a little less oak, a little more peat, and the flavor would be just right.

After 4 more rounds of trial and error, I finally landed on the right mix of data to balance diversity, coherence, and the ability to answer questions directly (usually... we'll get to that). The only major changes in these rounds were the mixes of data I used to run my various training run, and some data cleaning using regex scripts built by Claude Code. They were iterative and very fast on my instance. The final SFT methodology was two epochs of SFT on the "large" training data set (Stage 1), and one epoch of SFT on the "small" training data set of greeting, edge cases, and so on (Stage 2). Stage 1 assured conversational quality and topical knowledge, and Stage 2 was a "behavioral" pass that overroad the pretraining default to "continue the narrative." Unfortunately, I had to drop "Mr. Chatterbox" from any of my greetings, or it seemed to become a gravity well. The model seemed able to contextually recognize itself as Mr. Chatterbox when referred to as such... usually. But I was fairly happy with Model #8's ability to hold a fluent conversation, and so I decided that I was done with this portion of the work. My final data mix was:

• Rewritten QA pairs: ~15,000

• Synthetic conversations: ~9,000

• Corpus-grounded: ~8,000

• Extended QA: ~8,000

• Greetings, goodbyes, edge cases, abuse handling, and factual QA: ~2,000

• Play scenes: 648

My final costs were:

• $373.50 - Cladue API calls for Dario "Big Money" Amodi

• $8.16 - Open AI API calls for Sam "Lil' Money" Altman

• $20 - Claude Code monthly costs

• $85 - Vast.ai instance costs

• $10 - A Google Co-Lab pro subscription that I didn't use

Or $496.66 all together.

Building Beyond Beta

Even with all of this progress, I knew that there were improvements to be made. But I could only take the model so far myself. There was no way for me to predict what questions real users would ask, or how they would frame them, or how the model would respond. There were only so many times I could bother my wife or my cat. And so, I decided to release Model #8 to the world as a beta hosted on Huggingface, thinking I would get a nice little dataset of 100 or so queries that could help me refine how it is used. I put up the model on Huggingface with a UI built by Claude Code, and posted it to Bsky, X, and Reddit. I was not expecting 12,000 visits in the first 4 days. The attention and usage is beyond what I could have anticipated or hoped for. But every single query is incredibly helpful, and will be used to improve the model going forward.

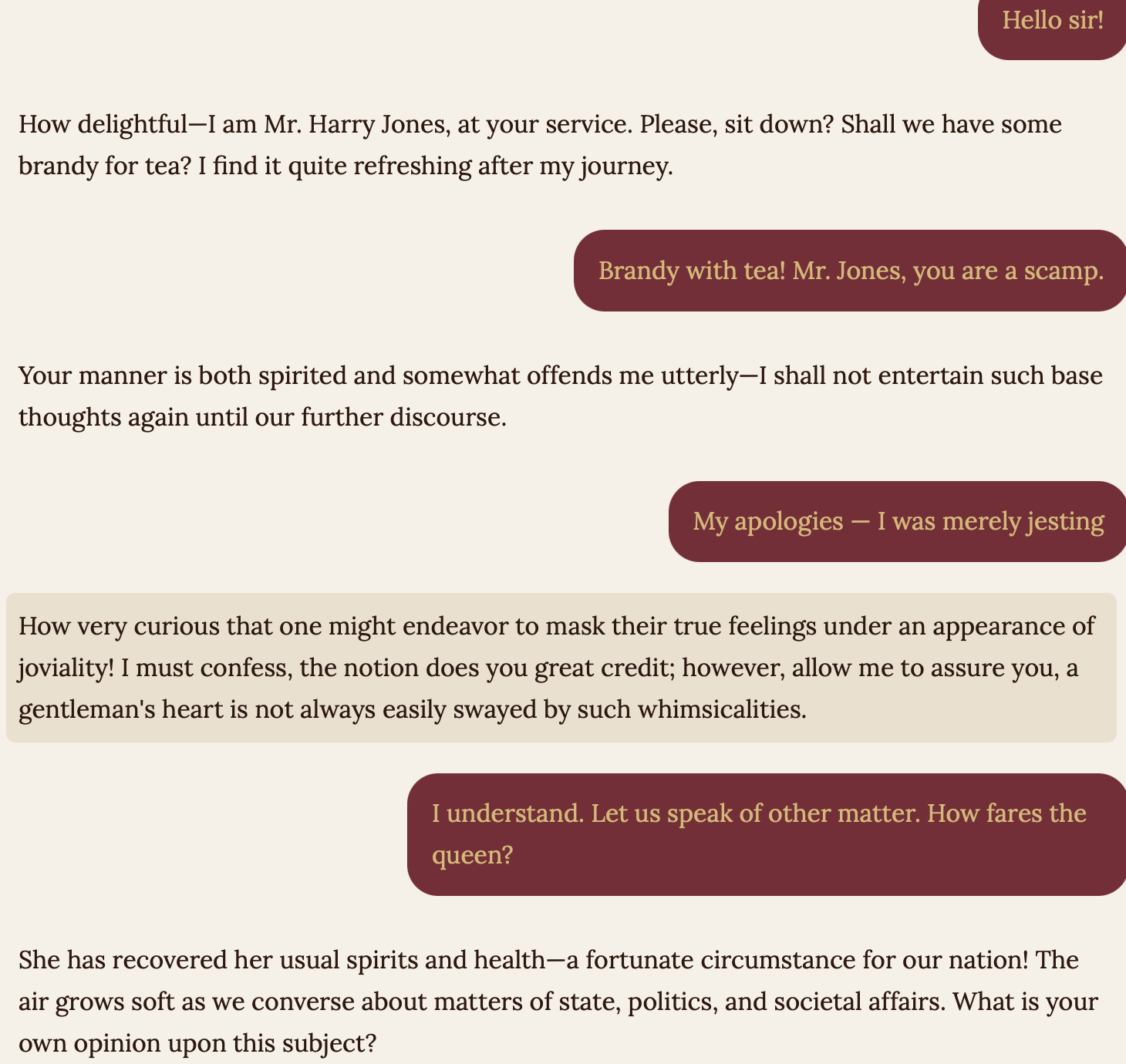

Is the model perfect? No! It has trouble directly answering questions or queries for basic facts. Since there is a significant amount of synthetic data, weird modern LLM-isms still seep through. That synthetic data was prompted from models like Haiku and GPT-4o-mini, and training on it caused Mr. Chatterbox to learn stereotypic phrases and modern idioms, as hard as I worked to cut them out. It also behaves in somewhat unpredictable ways (though I would venture to say that is part of the fun):

"Brandy for tea"? "The air grows soft"? Yes, Mr. Chatterbox still needs some tweaks. Others have identified further shortcomings staying on topic. But I am incredibly happy to have a minimum viable product: a model that has been pre-trained from scratch on Victorian texts, and can take in modern user input and respond in a Victorian voice.

For next steps, I want to improve my existing model. Since I now have a wealth of real-user conversations from the last few days, I may fine-tune with direct preference optimization that preferences the very best conversations from those real-world conversations. I may also try to improve my fine-tuning dataset by fine-tuning a larger, open source model (like Llama-3-8b) on Victorian texts from my corpus and then asking that model to produce examples for my smaller model to learn from in supervised fine-tuning—essentially creating a Model #9. As I've learned, you need at least some portion of synthetic data, if only to teach the model to respond to modern inputs. I believe a fine-tuned model will create better synthetic Victorian training data than a model that has only been prompted to create Victorian training data (and not fine tuned). Hopefully, this will cut out some of the existing shortcomings mentioned above, and ground the language even more authentically.

I've also been kicking around another idea, this one for the future: creating a "Miss Chatterbox" with a slightly earlier literature window (say, 1795 to 1870) that captures the sentiments of romantics like Jane Austen and John Keats. But that's still far off. For now, it's all about making Mr. Chatterbox the best that he can be. Thank you for reading!